What kind of ML approach works best for finding Product Qualified Leads?

Are you working at a SaaS company that is trying to better prioritize leads or accounts to work on? And are you hoping usage data can help with that?

Here are a few common situations our customers find themselves in:

- They want to know which free trial users to focus on converting

- They want to know who they should upsell or cross-sell to

- They want to identify churn risks proactively

In all these situations, usage data comes in handy. We’ve written elsewhere about why PQLs perform well, but in short: how your users interact with your product is often a stronger intent signal than whether they watched your webinar or what their job title is.

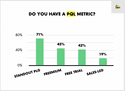

If you don’t believe me, take a look at OpenView’s survey. It shows that the best-performing companies use PQLs more than other companies do.

Why everyone is using analytics to find PQLs

Dropbox was one of the first SaaS companies to heavily rely on usage data. They dedicated a team of data scientists to find the usage metrics that predicted when a free user was likely to become a paying customer.

So why are the best companies taking a data-driven approach to finding these usage-based PQL signals?

Too many options for a human to pick from

Scoring marketing qualified leads (MQLs) is relatively simple compared to scoring PQLs. You often have a list of 10 to 20 factors to pick from, such as:

- Marketing data (Did this lead attend our webinar, or read our blog?)

- Firmographic data (e.g., size of company, industry)

- Demographic data (e.g., job title, seniority)

Once you throw product usage data into the mix, finding the right criteria for what counts as a PQL becomes much more complex:

- Even ‘simple’ products like Zoom have dozens of features (e.g., calling, polls, number of attendees added)

- There are many possible metrics for each feature (is it the length of a Zoom call, or the frequency of a Zoom call that matters?)

- Each metric can have many variations (even if it’s the length of a Zoom call that’s critical, should we measure the average length per week, or the week-over-week change?)

ML allows us to scan through all combinations of usage metrics AND firmographic or demographic attributes.

We can then find the combination that best predicts a conversion, upsell, or churn.
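To make the size of this search space concrete, here is a minimal sketch that enumerates candidate usage signals as feature × metric × aggregation combinations and ranks each one by how well it separates converted from non-converted accounts. The feature, metric, and aggregation names are hypothetical, and the AUC-style ranking score is an illustrative choice, not a description of how HeadsUp’s models work.

```python
from itertools import product
from statistics import mean

# Hypothetical building blocks for candidate usage signals.
FEATURES = ["calls", "polls", "attendees"]
METRICS = ["count", "duration_min"]
AGGREGATIONS = ["weekly_avg", "wow_change"]


def aggregate(weekly_values, how):
    """Collapse a list of weekly values into a single number."""
    if how == "weekly_avg":
        return mean(weekly_values)
    if how == "wow_change":
        # Week-over-week change between the last two weeks (0.0 if only one week).
        if len(weekly_values) < 2:
            return 0.0
        return weekly_values[-1] - weekly_values[-2]
    raise ValueError(f"unknown aggregation: {how}")


def separation_score(values, labels):
    """AUC-style score: share of (converted, not converted) pairs in which the
    converted account has the higher value, with ties counting as half."""
    pos = [v for v, y in zip(values, labels) if y == 1]
    neg = [v for v, y in zip(values, labels) if y == 0]
    if not pos or not neg:
        return 0.5
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def rank_candidates(accounts, labels):
    """Score every feature x metric x aggregation combination, best first.

    accounts: list of dicts mapping (feature, metric) -> list of weekly values.
    labels: 1 if the account converted, else 0.
    """
    scored = []
    for feature, metric, how in product(FEATURES, METRICS, AGGREGATIONS):
        values = [aggregate(acc[(feature, metric)], how) for acc in accounts]
        scored.append(((feature, metric, how), separation_score(values, labels)))
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

Even this toy setup yields 3 × 2 × 2 = 12 candidates. With dozens of real features plus firmographic and demographic attributes, the count multiplies quickly, and a real model would also weigh signals in combination rather than scoring each one alone.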

Not all ML approaches are created equal

Lots of tools promise some form of automated lead analysis. Even some legacy customer success (CS) tools from ten years ago had automated ‘health scores’.

At HeadsUp, we spoke with many product-led growth (PLG) companies and identified what distinguishes the ML approach of the companies with the best-performing PQLs.

We’ve narrowed it down to 4 elements.

1. ML models that are customized for GTM

Go-to-market (GTM) problems have specific nuances. The best ML models are tuned to accommodate them.

One example is seasonality. Most GTM leaders understand that customers behave differently across the year: Q1 differs from Q4, and the start and end of a quarter also have different dynamics.

One of our customers found that usage patterns for their product varied throughout the year. Our model was built to detect this, and proposed slightly different PQL definitions for different time periods.

For example, during quiet months, a small growth in usage can be as strong an intent signal as large spikes during peak season.

Another nuance we take into account is geography. A good model should check whether different territories need different PQL definitions.
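One simple way to let a PQL definition vary by season and territory is to set thresholds relative to each segment’s own history instead of using one global cut-off. The sketch below flags an account when its usage growth lands in the top slice of its (period, territory) segment. The segment labels, the growth metric, and the 80th-percentile cut-off are all assumptions for illustration.

```python
from collections import defaultdict


def quantile(sorted_values, q):
    """Simple index-based quantile (q in [0, 1]) of an already-sorted list."""
    idx = round(q * (len(sorted_values) - 1))
    return sorted_values[idx]


def segment_thresholds(rows, q=0.8):
    """Compute one usage-growth cut-off per (period, territory) segment.

    rows: iterable of (period, territory, growth) tuples from historical data.
    """
    by_segment = defaultdict(list)
    for period, territory, growth in rows:
        by_segment[(period, territory)].append(growth)
    return {seg: quantile(sorted(vals), q) for seg, vals in by_segment.items()}


def is_pql(period, territory, growth, thresholds):
    """Flag an account whose growth is in the top slice of its own segment."""
    return growth >= thresholds[(period, territory)]


# Toy history: week-over-week usage growth in a quiet vs. a peak period.
history = (
    [("quiet", "EMEA", g) for g in (0.01, 0.02, 0.03, 0.05, 0.10)]
    + [("peak", "EMEA", g) for g in (0.20, 0.30, 0.40, 0.50, 1.00)]
)
thresholds = segment_thresholds(history)
```

With this toy history, 6% growth clears the quiet-period bar (5%) but falls well short of the peak-season bar (50%), which mirrors the point above: a small increase in a quiet month can be as strong a signal as a large spike in peak season.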

2. Using a different model for each objective

Many teams and products take a one-size-fits-all approach. They use a single, simple ML model that looks for correlated signals regardless of the GTM objective, whether that is detecting churn or finding upsell opportunities.

A better approach is to train a separate model for each objective. Examples include:

- Different models for cross-selling different products

- Upgrading from free to premium vs. from premium to enterprise

- Finding accounts that should be nudged towards activation

- Detecting churn from different product offerings or pricing tiers
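Here is a minimal sketch of the “one model per objective” idea: the same account features, but an independently trained model for each label. To stay self-contained it uses a tiny logistic regression fit with stochastic gradient descent in plain Python; a real setup would more likely use a library such as scikit-learn. The objective names, features, and toy data are hypothetical.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logreg(X, y, lr=0.1, epochs=500):
    """Fit a logistic regression with plain stochastic gradient descent on log-loss."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            # Gradient of log-loss w.r.t. the logit is (predicted - actual).
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            w = [wj - lr * err * xj for wj, xj in zip(w, xi)]
            b -= lr * err
    return w, b


def predict(model, x):
    """Probability that an account with features x belongs to the positive class."""
    w, b = model
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


def train_per_objective(X, labels_by_objective):
    """Train one independent model per GTM objective on the same account features."""
    return {objective: train_logreg(X, y) for objective, y in labels_by_objective.items()}


# Toy data. Hypothetical features per account: [added_seats, went_inactive] (0 or 1).
X = [[1, 0], [0, 1], [1, 1], [0, 0]]
models = train_per_objective(X, {
    "upsell_to_enterprise": [1, 0, 1, 0],  # upgraded this quarter?
    "churn": [0, 1, 1, 0],                 # churned this quarter?
})
```

The two models end up weighting the same features very differently: in this toy data the upsell model leans on seat growth and the churn model on inactivity, which a single shared model scoring “likelihood of anything” would blur together.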

3. Constant re-training

The behavior and intent of your users change quickly.

Changes can be caused by new features released in your product, shifts in the competitive landscape, or even the macroeconomic environment.

We update our models weekly to account for these changes.

Many teams find this difficult because data science resources are limited, and updating PQLs requires going back and forth between multiple teams and tools.
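A weekly retraining cadence can be sketched as two small rules: retrain only once the current model is at least seven days old, and train on a rolling window of recent activity so that stale behavior ages out. The seven-day interval follows the weekly cadence described above; the 12-week window, the event format, and the function names are assumptions for illustration.

```python
from datetime import datetime, timedelta

RETRAIN_INTERVAL = timedelta(days=7)   # weekly cadence
TRAINING_WINDOW = timedelta(weeks=12)  # hypothetical lookback window


def needs_retrain(last_trained_at, now):
    """True if no model exists yet or the current one is at least a week old."""
    return last_trained_at is None or now - last_trained_at >= RETRAIN_INTERVAL


def training_slice(events, now):
    """Keep only events inside the rolling window ending at `now`.

    events: iterable of (timestamp, payload) tuples.
    """
    cutoff = now - TRAINING_WINDOW
    return [(ts, payload) for ts, payload in events if cutoff <= ts <= now]


def maybe_retrain(last_trained_at, events, now, train_fn):
    """Retrain on the recent window if due.

    Returns (last_trained_at, new_model), where new_model is None if no retrain ran.
    """
    if not needs_retrain(last_trained_at, now):
        return last_trained_at, None
    model = train_fn(training_slice(events, now))
    return now, model
```

Here `train_fn` stands in for whatever training routine is in use; keeping it as a parameter means the scheduling logic stays the same when the model changes.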

4. Retaining a high degree of human input

The best PQLs aren’t fully automated by analytics.

Here are a few examples of how human input and tweaking improve PQL accuracy:

- Including or excluding accounts. Certain accounts have unusual usage patterns, perhaps because they have a strange use case or fall outside your ICP (Ideal Customer Profile). On the other hand, some accounts might be especially important. Human input helps weight these accounts appropriately for the ML model.

- Giving feedback and measuring the performance of the ML model. Few PQL models are perfect on the first try. Feedback from reps and CSMs is critical. Our customers often tell us whether a given iteration of the PQL is working well, and whether we are missing signals they think are strong predictors.
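Both kinds of human input can be folded into the pipeline fairly mechanically. This sketch turns include/exclude/boost decisions into per-account training weights, and computes the precision of flagged PQLs from rep and CSM feedback so one iteration can be compared with the next. The override labels, the 2× boost, and the feedback format are hypothetical.

```python
# Hypothetical override labels a rep or CSM might set on an account.
OVERRIDE_WEIGHTS = {
    "exclude": 0.0,  # unusual use case or outside the ICP: drop from training
    "boost": 2.0,    # especially important account: counts twice as much
}


def training_weights(account_ids, overrides):
    """Map each account to a sample weight.

    Accounts with no override (or an unrecognized label) keep the default 1.0.
    """
    return {acc: OVERRIDE_WEIGHTS.get(overrides.get(acc), 1.0) for acc in account_ids}


def pql_precision(feedback):
    """Share of flagged PQLs that reps confirmed as genuinely qualified.

    feedback: list of (account_id, was_good_lead) pairs for accounts the model flagged.
    Returns None when there is no feedback yet.
    """
    if not feedback:
        return None
    return sum(1 for _, good in feedback if good) / len(feedback)
```

The weights can be passed to any trainer that accepts per-sample weights, and tracking precision per model version gives reps and data teams a shared number to discuss when deciding whether an iteration is an improvement.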

Implementing the right ML approach is easier said than done. Most companies don’t get there because it requires setting up the right processes, having enough data science resources, and building internal tooling.

That’s why our ML-powered platform comes in handy for many SaaS companies. We do all the tedious work so that they can get results in days instead of months.

To get access, just shoot me a note at momo@headsup.ai.